How a Single Drone Shot Is Used to Extract the 3D Orientation of a Circular Antenna

- Vision Elements

- Nov 8, 2022

Updated: Feb 6, 2023

Case Study

Overview:

Using drone mapping, companies can survey large or tough-to-reach areas for accurate site measurements. This process decreases survey cost and improves turnaround time.

Our client maps antenna poles using drones for the purpose of maintenance and "real-estate" management.

Challenge:

The client needed the 3D orientation of a circular antenna to be extracted from a single drone shot.

Three-dimensional shape reconstruction usually requires stereo images, meaning at least two shots taken from locations distant from one another. This not only adds to the burden of image acquisition, but also requires matching distinct points across both images. Such points are often hard to detect on the edge of the antenna, since they must correspond to exactly the same physical point in both images.

If the planarity and circular shape of the antenna are used as constraints, it becomes possible to extract the 3D orientation from a single image. In that case, the automatic detection of points on the edge of the antenna becomes an easy task: no matching is required, so any edge point can be added to strengthen the solution.

Role:

Our solution for this client used mathematical constraints that significantly reduced the complexity of extracting the desired 3D orientation. With this solution, we enabled them to build the proof of concept faster, and with more accurate results, than with the original state-of-the-art stereo-based methods.

Approach:

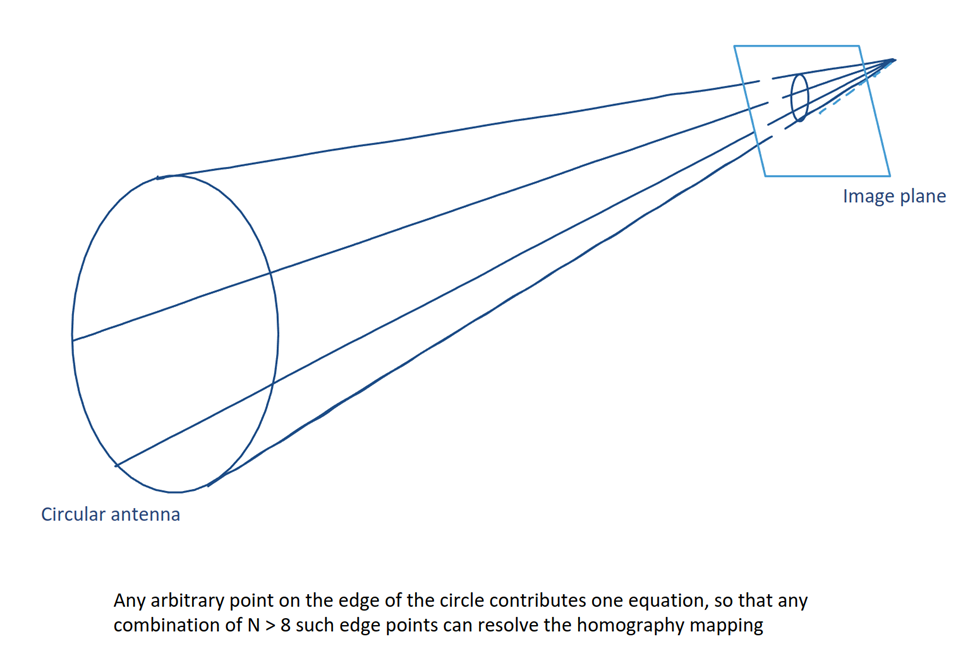

When objects are planar, image warping (also known as homography mapping) can be used to mathematically tie 3D points on the object to 2D locations in a single image. Four such point pairs are enough to recover that bijection. For that to happen, however, the 3D coordinates of each of the four control points need to be known, which is rarely practical to achieve.
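To make the four-point case concrete, here is a minimal sketch of recovering a homography from known point pairs with the standard direct linear transform. It is an illustration only, not the code used in this project; the function name `homography_from_pairs` and the sample coordinates are hypothetical, and the sketch assumes the control points are known in the object's own plane coordinates, with no three of them collinear.

```python
import numpy as np

def homography_from_pairs(plane_pts, image_pts):
    """Estimate the 3x3 homography H with image ~ H @ plane (homogeneous).

    plane_pts, image_pts: sequences of (x, y) pairs, at least four of each.
    Each pair contributes two linear equations in the nine entries of H.
    """
    rows = []
    for (X, Y), (u, v) in zip(plane_pts, image_pts):
        rows.append([X, Y, 1, 0, 0, 0, -u * X, -u * Y, -u])
        rows.append([0, 0, 0, X, Y, 1, -v * X, -v * Y, -v])
    # The solution is the right singular vector with the smallest singular value.
    _, _, vt = np.linalg.svd(np.asarray(rows, dtype=float))
    H = vt[-1].reshape(3, 3)
    # Fix the free scale (assumes H[2, 2] is not zero).
    return H / H[2, 2]

# Hypothetical example: corners of a unit square and where they land in the image.
plane = [(0.0, 0.0), (1.0, 0.0), (1.0, 1.0), (0.0, 1.0)]
image = [(10.0, 12.0), (52.0, 15.0), (48.0, 60.0), (8.0, 55.0)]
H = homography_from_pairs(plane, image)
```

The catch described above is visible in the inputs: `plane` must hold the true coordinates of each control point, and each one must be paired with the right image point.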

Constraining the shape to be circular allows us to recover the eight parameters of the homography using eight arbitrary edge points, rather than four uniquely identified control-point pairs.
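One way to see why an unmatched edge point can still contribute an equation is sketched below. It assumes (this is not stated in the original) that the plane coordinates are scaled so the antenna rim is the unit circle, with $\mathbf{X} = (X, Y, 1)^\top$ a rim point in plane coordinates, $\mathbf{x}$ its homogeneous image location, and $H$ the homography.

$$
\mathbf{X}^\top D\,\mathbf{X} = 0, \qquad D = \mathrm{diag}(1,\, 1,\, -1)
$$

$$
\mathbf{x} \simeq H\,\mathbf{X}
\quad\Longrightarrow\quad
\mathbf{x}^\top H^{-\top} D\, H^{-1}\, \mathbf{x} = 0
$$

Each detected edge point $\mathbf{x}_i$ therefore yields one scalar equation in the entries of $H$, and it does so without knowing which rim point it corresponds to.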

Results:

Vision Elements was hired for a short-term engagement to solve a problem. Our computational scientists ended up developing a solution that the company originally didn’t think was possible and would not have arrived at without our direction.

Problem Solved!

Currently, each antenna image goes through simple edge detection. Each of the many edge points adds one equation, and the resulting N > 8 equations are solved with a straightforward least-squares scheme to recover the 3D orientation of the antenna relative to the camera. Adding the camera-to-world exterior orientation gives the orientation of the antenna in the ‘world’ coordinate system.
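The sketch below illustrates the general idea of "one equation per edge point, solved by least squares" with a different, textbook formulation, which is not necessarily the scheme used in this project. It fits a conic to the edge points, reads the two candidate plane normals of the circle off the eigen-decomposition of that conic, and rotates a normal into the world frame. All function names are hypothetical. It assumes the edge points are already in normalized camera coordinates (camera intrinsics removed) and that `R_cam_to_world` is a known 3x3 rotation.

```python
import numpy as np

def fit_conic(pts):
    """Least-squares conic through N >= 5 points; returns a symmetric 3x3 Q
    such that [x, y, 1] @ Q @ [x, y, 1] is approximately 0 for every point."""
    x, y = pts[:, 0], pts[:, 1]
    # One row (one equation) per edge point.
    A = np.column_stack([x * x, x * y, y * y, x, y, np.ones_like(x)])
    _, _, vt = np.linalg.svd(A)
    a, b, c, d, e, f = vt[-1]
    return np.array([[a, b / 2, d / 2],
                     [b / 2, c, e / 2],
                     [d / 2, e / 2, f]])

def circle_normals_from_conic(Q):
    """Two candidate unit normals (camera frame) of a plane that cuts the
    viewing cone X @ Q @ X = 0 in a circle. The sign of each normal is free."""
    w, V = np.linalg.eigh(Q)
    if np.sum(w > 0) < 2:
        w = -w  # Q is only defined up to sign; make two eigenvalues positive
    order = np.argsort(w)[::-1]  # l1 >= l2 > 0 > l3
    l1, l2, l3 = w[order]
    V = V[:, order]
    sx = np.sqrt((l1 - l2) / (l1 - l3))
    sz = np.sqrt((l2 - l3) / (l1 - l3))
    return [V @ np.array([s * sx, 0.0, sz]) for s in (1.0, -1.0)]

def normal_to_world(n_cam, R_cam_to_world):
    """Rotate a camera-frame normal into the world frame."""
    return R_cam_to_world @ n_cam
```

With this formulation a single view leaves two candidate normals, so extra information (for example, the expected mounting direction) would be needed to choose between them. The original text does not say how, or whether, its own scheme faces this ambiguity.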
